This article was first published as a guest post for the Lean Systems Society in March 2013.

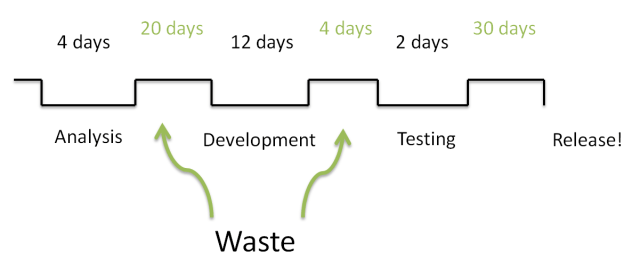

When I was first taught about value streams, they looked a lot like this:

The idea is that you look at the different activities through which the work flows, and the gaps between them, then narrow the gaps, because waiting around for things to happen is wasteful.

We’re used to thinking of software in terms of phases. We talk about analysis, design, implementation, testing and production. If we’re feeling more Agile and our teams are more collaborative, we might talk about work that’s ready, in progress, and done.

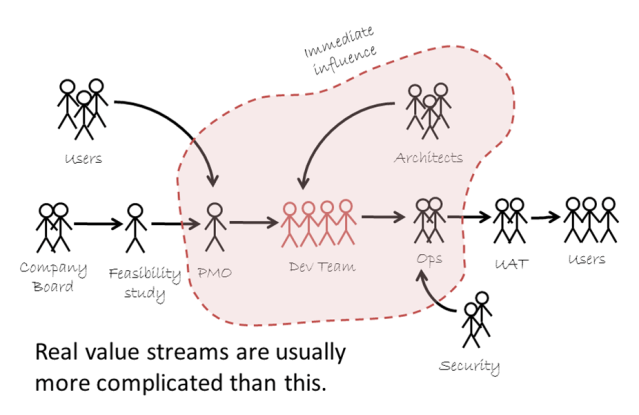

As companies get larger, the value streams tend to get more complicated, but we still talk about our software in terms of those original phases. Those are the ones we see every day on our Scrum or Kanban boards. We don’t always notice the things that happen outside the team… and it’s often there that the magic happens.

In software development, work is more like product development – designing a new car – than like a production line where we churn the same thing out over and over again. The time between phases often varies. Our biggest constraints are often not the length of time it takes to process “work”, but the length of time it takes to get feedback and learn from that feedback. Our Kanban boards signal impediments to the flow of work, and an impediment to flow is also an impediment to feedback; this is why they work. Shortening the value stream gives us faster feedback on the whole of our product.

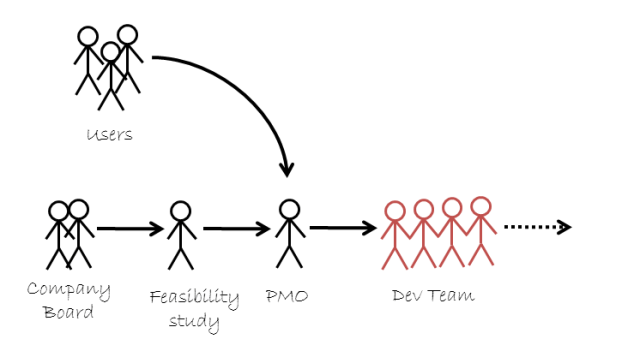

Outside of the development team, the gaps between phases can be quite small. Other ways to shrink the stream might involve eliminating phases entirely, or minimising the work that needs to be done, rather than worrying about the gaps between them. One of the tricks I’ve learnt with value streams is to stop asking what happens to work, and to start asking who it happens with. Who wants this in the first place? Who’s providing the budget or deciding that this is high priority? Who will give you feedback? Whose feedback might end up being “no”?

So the development team get their requirements. Who do they get them from? Is it the users? An analyst? Are they able to just go off, get the requirements and work, or is there some kind of scope control, and who applies that? Ah, there’s a funding body! And a feasibility study group… and another funding body for the feasibility study. The things we discover!

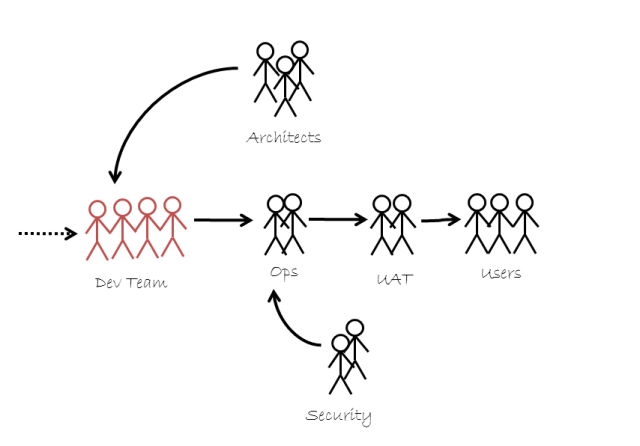

Going out from the team the other way, we start finding what happens before code will be used. Who wants to test this before it goes to real users? Who needs to certify the app before it hits the store? Who is actually going to do the release, and what do they need? Who needs to tell the users so they actually start using the code?

At this point all kinds of gatekeeping stakeholders can also appear; people who will not let the code be used, or who won’t give their support, unless their needs are met. The support team need monitoring and logging to be in place. The architecture team may have performance requirements or maintainability and changeability requirements. Security, legal and audit groups pick the release apart. Sometimes it’s as simple as just telling the marketing department, but even that can be forgotten in the relief that comes with actually being finished (except you’re not).

As we start tracking down the individual groups, we can start seeing that the value stream for much of our software is in fact a value watershed, complete with tributaries, dams, a vast delta to get bogged down in, and even the occasional oxbow lake, cut off and isolated as soon as the river floods.

Now we can start looking at how long it takes to get the information and feedback we need from the different groups. In some cases, we may discover that nobody has ever engaged the group at all. Our non-functional requirements were little more than a note saying, “Remember performance!”, and our security requirements derived from some years-old story that’s still retold around the water cooler in hushed voices. Yet there’s still someone out there who knows the real story, or has the real figures. The best development teams, to them, are the ones that help them sleep soundly at night.

For over a decade now, we’ve known that getting testers involved early on, before the code is written, can help to improve the quality of the code as well as discovering some of those annoying scenarios that we wouldn’t otherwise consider. Acceptance Test-Driven Development (ATDD) and Behaviour-Driven Development (BDD) were both conceived along these lines. But what’s to stop us from getting any stakeholder involved? Why shouldn’t we talk to every gatekeeping stakeholder and turn that person or group into an educator instead?

If we were to do that for every stakeholder, it would result in even more analysis. We’d be moving the effort from one side of the stream to the other, rather than reducing the length of the stream. We know that doing a vast amount of analysis up-front doesn’t always help.

So how do we shrink the value stream from here?

In many cases, the stakeholder requirements are already so well known that we don’t need to talk to them. We use Active Directory for logging in. We know to avoid SQL injection attacks. Our performance requirements are minimal; we’re pretty sure our code will meet them. In these cases, letting stakeholders test afterwards – often in parallel – is the best thing to do. In others, providing proof may be enough – through performance metrics, or code complexity metrics, or continuous review, for instance. It might lengthen the development phase, but it’s usually worth it for the faster feedback and the elimination of later testing phases.

This is often referred to, vaguely, as “baking quality in”, though when I talk to development teams they often have little idea what “quality” means, or how to measure it, or who cares. Mapping the value stream with people can change that.

We’ll also be engaging new stakeholders, or meeting new requirements for existing stakeholders. A question I like to ask is, “What’s different about this project? What will it let the company do that they’ve never done before?” This is Cynefin’s complex domain; a place where outcomes emerge, rather than being predicted, and in which feedback is absolutely crucial.

By engaging these stakeholders earlier, getting their feedback on prototypes and in showcases, we can often eliminate much of the testing that needs to happen afterwards. Their testing phases merge with those of the development team. Since this is the space in which most discoveries will be made, it’s also a great place to work out if projects should go ahead at all (beware of any consultants or coaches who “guarantee success” with their approach!) and to deliberately pivot, Lean Start-Up style, if the original assumptions around feasibility or usefulness turn out to be wrong.

Now that we know where feedback is needed most, we can use our value stream the traditional way. We can look for gaps in the stream where we’re waiting for people, and see if we can free them up to respond more quickly. We can look for anything which creates large batches, and see if we can get agreement to make those batches smaller by reducing transaction cost (incremental funding can be a big win).
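If it helps to make this concrete, here is a rough sketch of a value stream recorded by person rather than by phase, with the waiting time in front of each group. Everything in it is made up for illustration: the `Step` type, the group names and the day counts are assumptions, not figures from a real project.

```python
from dataclasses import dataclass


@dataclass
class Step:
    who: str          # the person or group the work happens with
    activity: str     # what they do to it
    work_days: float  # time they actually spend on it
    wait_days: float  # time the work waits before they pick it up


# Hypothetical stream; names and numbers are invented for the example.
stream = [
    Step("Funding body", "approve budget", 1, 20),
    Step("Analyst", "gather requirements", 5, 3),
    Step("Development team", "build and test", 10, 1),
    Step("Security audit", "review release", 2, 15),
    Step("Release team", "deploy", 1, 5),
]


def lead_time(steps):
    """Total elapsed days: working plus waiting."""
    return sum(s.work_days + s.wait_days for s in steps)


def flow_efficiency(steps):
    """Fraction of the lead time in which someone is actually working."""
    return sum(s.work_days for s in steps) / lead_time(steps)


def longest_waits(steps, n=2):
    """The n steps with the biggest gaps in front of them."""
    return sorted(steps, key=lambda s: s.wait_days, reverse=True)[:n]


if __name__ == "__main__":
    print(f"Lead time: {lead_time(stream)} days")
    print(f"Flow efficiency: {flow_efficiency(stream):.0%}")
    for step in longest_waits(stream):
        print(f"Waiting {step.wait_days} days for {step.who}")
```

Sorting by `wait_days` rather than `work_days` is the point: it surfaces the people we are waiting on, who are the ones to go and talk to first.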

Another question I often ask my clients is, “Where does your influence end?” This helps us see where quick wins can be made, and where we may need to start pushing for change outside that sphere. For those of us who aren’t always engaged at the C*O level, that awareness can help to focus conversations… and can start to give us a value stream for change programs themselves.

Development teams who consider the people in their value stream usually invite the right people to showcases. They have a better awareness of scope, and risk, and tend to do the newer, riskier pieces of work first. Their story cards tend not to start with “As a user…”, but instead, “As Mark from the Security Audit team…” And they speak not just the language of the department who asked for the application, but the language of the whole business, carrying that language into their code too.

One of our tenets in Lean is, “Respect for People”. I’m increasingly coming to believe that this isn’t as much a pillar of Lean as one of its most fundamental tests. If we do it right, people are respected – not because we force ourselves to behave a certain way, but because a good system leads us, inevitably, to take their needs and frustrations into account.

Our value stream is made of people. Let’s find out who.