A couple of weeks ago, I tweeted a paraphrase of something that David J. Anderson said at the London Lean Kanban Day: “Probabilistic forecasting will outperform estimation every time”. I added the conference hashtag, and, perhaps most controversially, the #NoEstimates one.

The conversation blew up, as conversations on Twitter are wont to do, with a number of people, perhaps better schooled in mathematics than I am, claiming that the tweet was ridiculous and meaningless. “Forecasting is a type of estimation!” they said. “You’re saying that estimation is better than estimation!”

That might be true in mathematics. Is it true in ordinary, everyday English? Apparently, so various arguments go, the way we’re using that #NoEstimates hashtag is confusing to newcomers and making people think we don’t do any estimation at all!

So I wanted to look at what we actually mean by “estimate”, when we’re using it in this context, and compare it to the “probabilistic forecasting” of David’s talk.

Defining “Estimate” in English

While it might be true that a probabilistic forecast is a type of estimate in maths and statistics, the commonly used English definitions are very different. Here’s what Wikipedia says about estimation:

Estimation (or estimating) is the process of finding an estimate, or approximation, which is a value that is usable for some purpose even if input data may be incomplete, uncertain, or unstable.

And here’s what it says about probabilistic forecasting:

Probabilistic forecasting summarises what is known, or opinions about, future events. In contrast to a single-valued forecasts … probabilistic forecasts assign a probability to each of a number of different outcomes, and the complete set of probabilities represents a probability forecast.

So an estimate is usually a single value, while a probabilistic forecast is a range of outcomes, each with its own likelihood.

Another way of phrasing that tweet might have been, “Providing a range of outcomes along with the likelihood of those outcomes will lead to better decision-making than providing a single value, every time.”

And that might have been enough to justify David’s assertion on its own… but it gets worse.

Defining “Estimate” in Agile Software Development

In the context of software development, estimation has all kinds of horrible connotations. It turns out that Wikipedia has a page on software development estimation too! And here’s what it says:

Software development effort estimation is the process of predicting the most realistic amount of effort (expressed in terms of person-hours or money) required to develop or maintain software based on incomplete, uncertain and noisy input.

Again, we’re looking at a single value; but do notice the “incomplete, uncertain and noisy input” there. Here’s what the page says later on:

Published surveys on estimation practice suggest that expert estimation is the dominant strategy when estimating software development effort.

The Lean / Kanban movement has emerged (and possibly diverged) from the Agile movement, in which this strategy really is dominant, mostly thanks to Scrum and Extreme Programming. Both of these suggest the use of story points and velocity to create the estimates. The idea is that you can then use previous data to provide a forecast; but again, that forecast is largely based on a single value. It isn’t probabilistic.
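To make the single-value point concrete, here’s a minimal Python sketch of the arithmetic that velocity-based forecasting often boils down to. The velocity history, backlog size and function names are hypothetical, chosen only for illustration; neither Scrum nor XP prescribes this exact calculation.

```python
import math
from statistics import mean

# Hypothetical velocity history: story points completed in each past sprint.
past_velocities = [18, 25, 12, 30, 21, 16]
remaining_points = 120  # hypothetical backlog size, in story points


def velocity_forecast(remaining, velocities):
    """Single-value forecast: sprints needed if every sprint hits the average velocity."""
    return math.ceil(remaining / mean(velocities))


def velocity_bounds(remaining, velocities):
    """Sprints needed at the best and worst velocities seen so far."""
    return (math.ceil(remaining / max(velocities)),
            math.ceil(remaining / min(velocities)))


print(velocity_forecast(remaining_points, past_velocities))  # 6
print(velocity_bounds(remaining_points, past_velocities))    # (4, 10)
```

With these made-up numbers the average gives 6 sprints, while the very same history is consistent with anything from 4 to 10. The single value hides that spread.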

Then, too, the “expertise” of the various people performing the estimates can often be questionable. Scrum suggests that the whole team should estimate, while XP suggests that developers sign up to do the tasks, then estimate their own. XP, at least, provides some guidance for keeping the cost of change low, meaning that expertise remains relevant and velocity can be approximated from the velocity of previous sprints. I’d love to say that most Scrum teams are doing XP’s engineering practices for this reason, but a lot of them have some way to go.

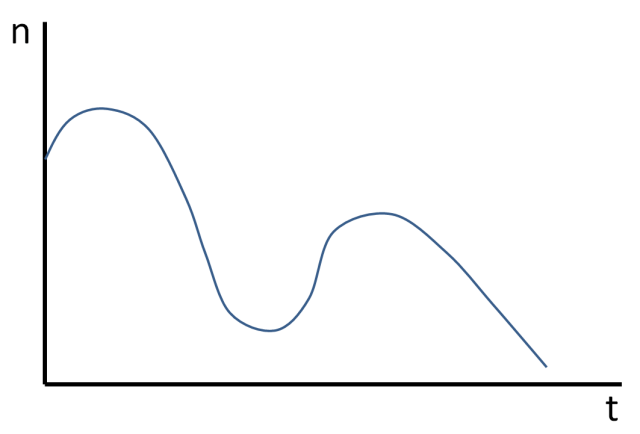

I have a rough-and-ready scale for estimating uncertainty, which helps me work out whether an estimate is even likely to be based on expertise. I use it to help me decide whether to plan at all, or whether to just give something a go and create a prototype or spike. Sometimes a whole project can rest on one small idea or piece of work that’s completely new and unproven, the effort of which can’t even be estimated using expertise (because there isn’t any), let alone historical metrics.

Even when we have expertise, the tendency is for experts to remember the mode, rather than the mean or median value. Since we often make discoveries that slow us down but rarely make discoveries which speed us up, we are almost inevitably over-optimistic. Our expertise is not merely inaccurate; it’s biased and therefore misleading. Decisions made on the basis of expert estimates have a horrible tendency to be wrong. Fortunately everyone knows this, so they include buffers. Unfortunately, work tends to expand to fill the time available… but at least that makes the estimates more accurate, right?
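Here’s a small illustration of why remembering the mode leads to optimism, assuming (hypothetically) a set of task durations with the kind of long right tail described above, where surprises only ever add time. The numbers are invented for the example.

```python
from statistics import mean, median, mode

# Hypothetical task durations in days: most tasks take 2 days, but a few
# hit unexpected discoveries and take much longer (a long right tail).
durations = [2, 2, 2, 2, 3, 3, 4, 5, 8, 13]

typical = mode(durations)    # the most common value, which experts tend to remember
middle = median(durations)   # half the tasks took longer than this
average = mean(durations)    # the value that actually drives the total

# Planning every task at the remembered "typical" duration...
planned_total = typical * len(durations)
# ...versus the effort the tasks really took.
actual_total = sum(durations)

print(typical, middle, average)     # 2 3.0 4.4
print(planned_total, actual_total)  # 20 44
```

Because the tail only stretches in one direction, mode < median < mean, and a plan built on the remembered “typical” task comes in at less than half of the real total in this made-up case.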

One of the people involved in the Twitter conversation suggested we should be using the word “guess” rather than “estimate”. That might indeed be more mathematically precise, and if we called them that, people might look for different ways to inform the decisions we need to make.

But we don’t. They’re called “estimates” in Scrum, in XP, and by just about everyone in Agile software development.

But it gets worse.

Defining “Estimate” in the context of #NoEstimates

Woody Zuill found this very early tweet from Aslak Hellesøy using the #NoEstimates hashtag, possibly the first:

@obie at #speakerconf: “Velocity is important for budgeting”. Disagree. Measuring cycle time is a richer metric. #kanban #noestimates

So the movement started with this concept of “estimate” as the familiar term from Scrum and XP. Twitter being what it is, it’s impossible to explain all the context of a concept in 140 characters, so a certain level of familiarity with the ideas around that tag is assumed. I would hope that newcomers to a movement would approach it with curiosity, and hopefully this post will make that easier.
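Aslak’s tweet doesn’t spell out what makes cycle time “richer”, but one plausible reading is that a set of cycle times gives you a distribution rather than a single average. Here’s a minimal Python sketch, assuming hypothetical start and finish dates for ten work items, that turns them into percentiles. The nearest-rank percentile method is just one simple choice among several.

```python
import math
from datetime import date

# Hypothetical work items: (date started, date finished).
items = [
    (date(2016, 3, 1), date(2016, 3, 3)),
    (date(2016, 3, 1), date(2016, 3, 4)),
    (date(2016, 3, 2), date(2016, 3, 5)),
    (date(2016, 3, 3), date(2016, 3, 7)),
    (date(2016, 3, 4), date(2016, 3, 9)),
    (date(2016, 3, 7), date(2016, 3, 12)),
    (date(2016, 3, 8), date(2016, 3, 14)),
    (date(2016, 3, 9), date(2016, 3, 17)),
    (date(2016, 3, 10), date(2016, 3, 21)),
    (date(2016, 3, 14), date(2016, 3, 29)),
]


def cycle_times(work_items):
    """Elapsed calendar days from start to finish for each item, sorted ascending."""
    return sorted((finished - started).days for started, finished in work_items)


def percentile(sorted_values, p):
    """Nearest-rank percentile: smallest value with at least p% of items at or below it."""
    rank = math.ceil(p * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


times = cycle_times(items)
for p in (50, 85, 95):
    print(f"{p}% of items finished within {percentile(times, p)} days")
```

With this made-up data, half the items finished within 5 days, 85% within 11 and 95% within 15: three different answers to “how long does a piece of work take?”, each with a stated likelihood.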

Woody confessed to being one of the early proponents of the hashtag in the context of software development. In his post on the #NoEstimates hashtag, he defines it as:

#NoEstimates is a hashtag for the topic of exploring alternatives to estimates [of time, effort, cost] for making decisions in software development. That is, ways to make decisions with “No Estimates”.

And later:

It’s important to ask ourselves questions such as: Do we really need estimates? Are they really that important? Are there options? Are there other ways to do things? Are there BETTER ways to do thing? (sic)

Woody, and Neil Killick who is another proponent, both question the need for estimates in many of the decisions made in a lot of projects.

I can remember getting the Guardian’s galleries ready in time for the Oscars. Why on earth were we estimating how long things would take? In retrospect, that time would have been much better spent getting as many of the features complete as we could. Nobody was going to move the Oscars for us, and the safety buffer we’d decided on to make sure that everything was fully tested wasn’t changing in a hurry, either. And yet, there we were, mindlessly putting points on cards. We got enough features out in time, of course, as well as some fun extras… but I wonder if the Guardian, now far more advanced in their ability to deliver than they were in my day, still spend as much time in those meetings as we used to.

I can remember asking one project manager at a different client, “These are estimates, right? Not promises,” and getting the response, “Don’t let the business hear you say that!” The reaction to failing to deliver something to the agreed estimates was to simply get the developers to work overtime, and the reaction to that was, of course, to pad the estimates. There are a lot of posts around on the perils of estimation and estimation anti-patterns.

Even when the estimates were made in terms of time, rather than story points, I can remember decisions remaining unchanged whatever the “guesses” said. There was too much inertia. If that’s going to be the case, I’d rather spend my time getting work done instead of worrying about the oxymoron of “accurate estimates”.

That’s my rant finished. Woody and Neil have many more examples of decisions that are often best made with alternatives to time estimation, including much kinder, less Machiavellian ones such as trade-off and prioritization.

In that post above, Neil talks about “using empiricism over guesswork”. He regularly refers to “estimates (guesses)”, calling out the fact that we do use that terminology loosely. That’s English for you; we don’t have an authoritative body which keeps control of definitions, so meanings change over time. For instance, the word “nice” used to mean “precise”, and before that it meant “silly”. It’s almost as if we’ve come full circle.

Defining “Definition”

Wikipedia has a page on definition itself, which points out that definitions in mathematics are different to the way I’ve used that term here:

In mathematics, a definition is used to give a precise meaning to a new term, instead of describing a pre-existing term.

I imagine this refers to “define y to be x + 2,” or similar, but just in case it’s not clear already: the #NoEstimates movement is not using the mathematical definition of “estimate”. (In fact, I’m pretty sure it’s not using the mathematical definition of “no”, either.)

We’re just trying to describe some terms, and the way they’re used, and point people at alternatives and better ways of doing things.

Defining Probabilistic Forecasting

I could describe the term, but sometimes, descriptions are better served with examples, and Troy Magennis has done a far better job of this than I ever have. If you haven’t seen his work, this is a really good starting point. In a nutshell, it says, “Use data,” and, “You don’t need very much data.”

I imagine that when David’s talk is released, that’d be a pretty good thing to watch, too.
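As a rough illustration of “use data” and “you don’t need very much data”, here’s a minimal Monte Carlo sketch in Python. It resamples a small, hypothetical history of weekly throughput (items finished per week) to produce a probability for each possible completion time, rather than a single date. Monte Carlo simulation is one widely used way of producing a probabilistic forecast; the function names, the data and the 85% threshold are assumptions for this example, not a description of Troy’s or David’s specific method.

```python
import random


def forecast_weeks(backlog, weekly_throughput, trials=10_000, seed=1):
    """Return {weeks: probability of finishing the backlog within that many weeks}.

    Each trial replays the future by drawing weeks at random (with replacement)
    from the observed throughput until the backlog is finished.
    """
    if max(weekly_throughput) <= 0:
        raise ValueError("need at least one week with positive throughput")
    rng = random.Random(seed)  # seeded only so the example is repeatable
    counts = {}
    for _ in range(trials):
        done, weeks = 0, 0
        while done < backlog:
            done += rng.choice(weekly_throughput)
            weeks += 1
        counts[weeks] = counts.get(weeks, 0) + 1
    # Turn the per-outcome counts into cumulative probabilities.
    forecast, finished = {}, 0
    for weeks in sorted(counts):
        finished += counts[weeks]
        forecast[weeks] = finished / trials
    return forecast


def weeks_for_confidence(forecast, confidence):
    """Smallest number of weeks whose cumulative probability meets the confidence."""
    return min(w for w, p in forecast.items() if p >= confidence)


# Hypothetical history: items finished in each of the last eight weeks.
history = [3, 5, 2, 6, 4, 0, 5, 3]
forecast = forecast_weeks(backlog=30, weekly_throughput=history)
for weeks, probability in forecast.items():
    print(f"within {weeks} weeks: {probability:.0%}")
print("85% confident:", weeks_for_confidence(forecast, 0.85), "weeks")
```

The output is a set of completion times, each with a likelihood: a range of outcomes rather than one number. Eight weeks of history is enough to run it; more data would mainly make the tails more trustworthy.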

Back in Greek mythology, there was a dog called Cerberus. It guarded the gate to the underworld, and it had three heads.
